(Previously on this blog: 1, 2, 3…)

Summary

Instrument your code.

Stop guessing.

Make your DBA happy.

Make your life as a BI Admin easier.

The Problem

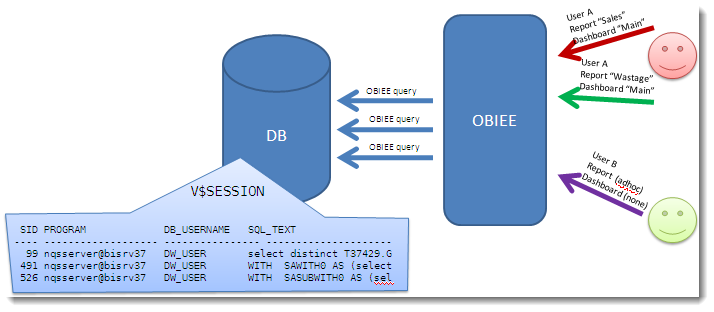

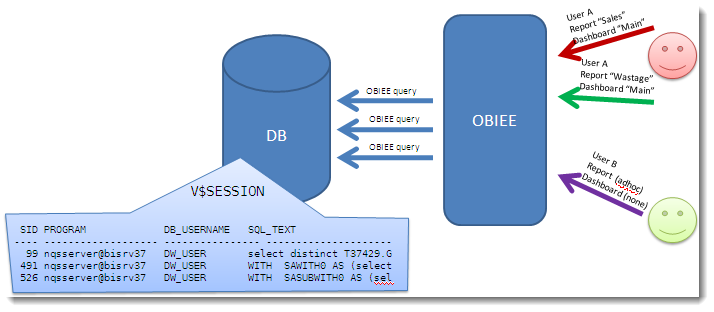

OBIEE will typically connect to the database using a generic application account.

(Hopefully, you’ll have isolated it to an account used only for this purpose – if you haven’t, you should.)

The problem is that you lose a lot of visibility of work both up and down the stack.

- An OBIEE query is causing a problem on the database – how do you identify the originator?

- You want to investigate the performance of an OBIEE query, but how do you identify which DB session it is?

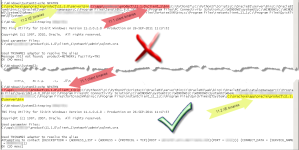

We know SID 491 is causing a problem, but how do we identify the originating OBIEE user?

You could start piecing together Usage Tracking and NQQuery.log files, but it’s hardly convenient or instantaneous is it?

The solution

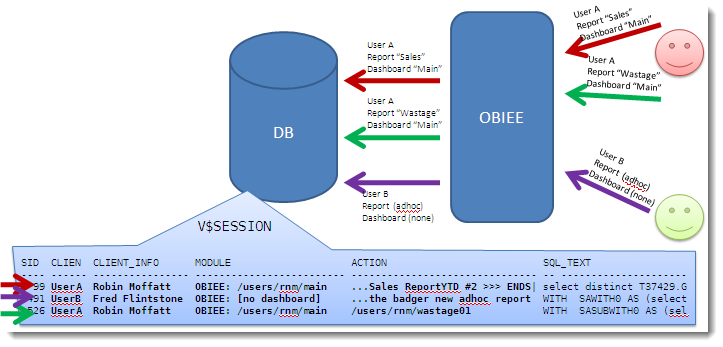

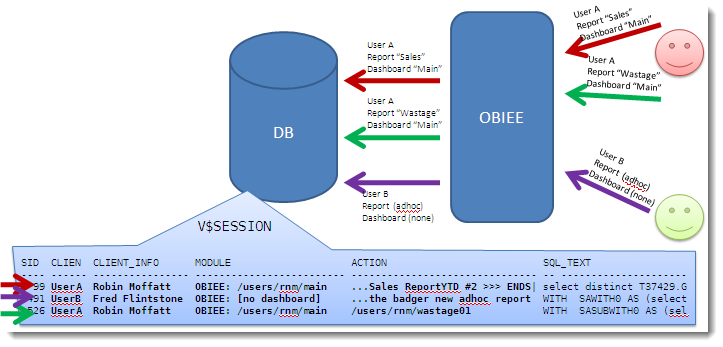

By taking advantage of native Oracle procedures, we can instrument our OBIEE code to pass through lots of valuable information:

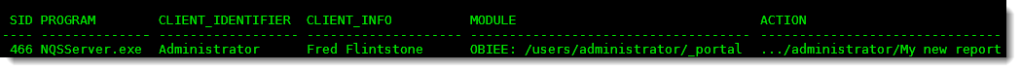

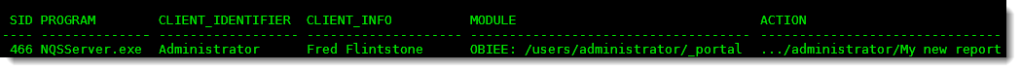

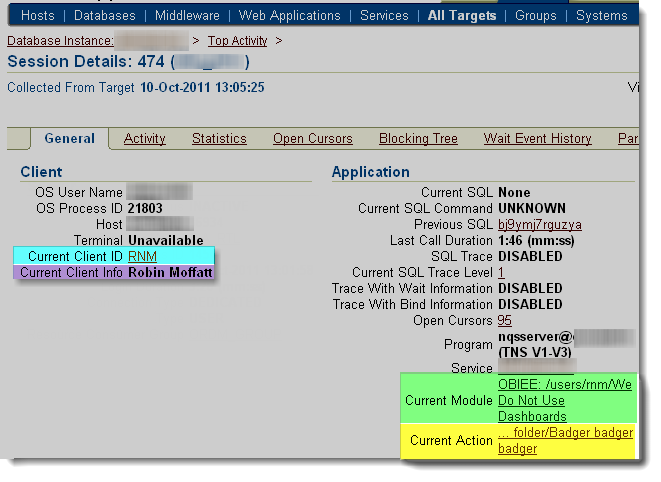

Now we can see which OBIEE user fired the query resulting in SID 491, and not only the user, but the dashboard and request name they are running.

This works in both OBIEE 10g and 11g.

See my previous post here for further background, and discussion of the procedures used.

Implementing it – overview

In essence, we harness internal OBIEE session variables which hold the user ID, name, dashboard and report name. We put a set of database calls on the connection pool(s) associated with query requests.

We have to do a bit of trickery to work around two issues.

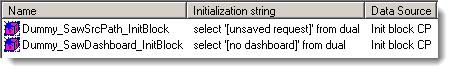

Firstly, the variables may not be set (you may not have saved your new request yet, or may be running it outside of a dashboard). To get around this, we create two dummy session variables with the same names, and populate them with dummy init blocks.

Secondly, there is a limitation to the number of characters that can be passed through, and so we manipulate the string if necessary to use the right-most characters.

Implementing it – Init Block and Dummy Variables

Summary:

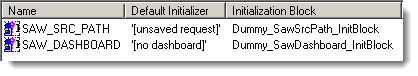

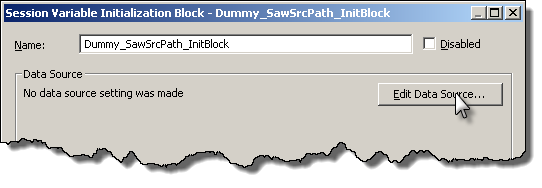

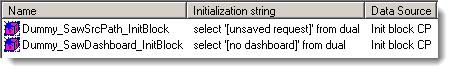

Create two init block/session variable pairs:

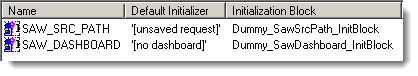

Session Variables

Initialisation Blocks

Be sure to use a connection pool which isn’t used for queries.

Step-by-step

Load up your RPD. If you haven’t already, create a new connection pool that is just for these init blocks. It can be to any database – in the examples below it’s an Oracle one, but any that supports selecting from a dummy table like DUAL in Oracle.

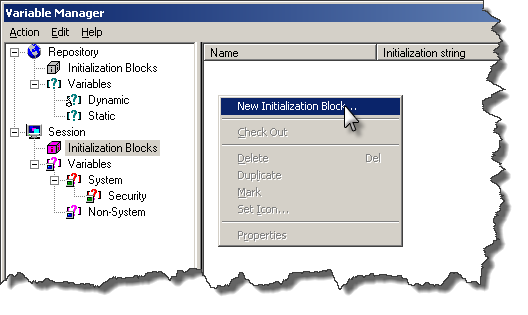

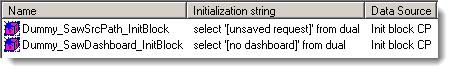

Go to Manage -> Variables, click on Session -> Initialisation Blocks. Right click in the section to the right, and select New Initialization Block.

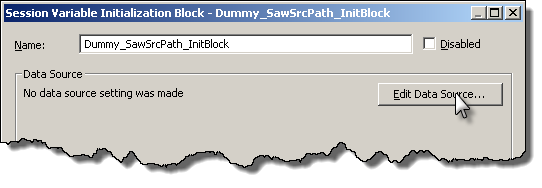

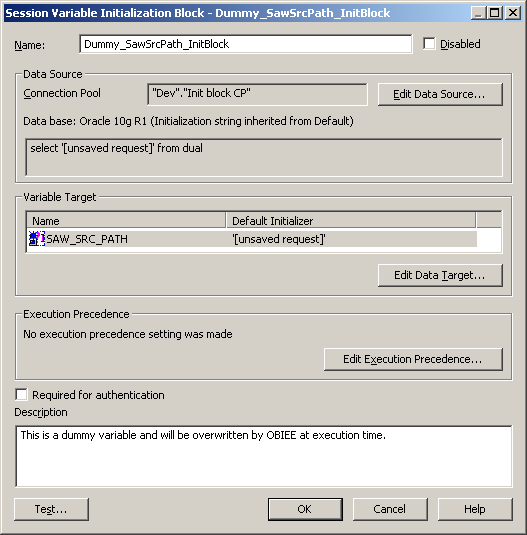

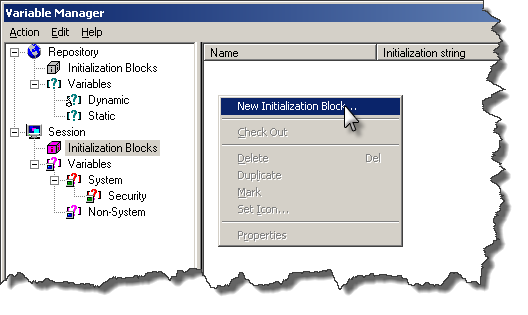

Call the init block Dummy_SawSrcPath_InitBlock, and then click on “Edit Data Source”

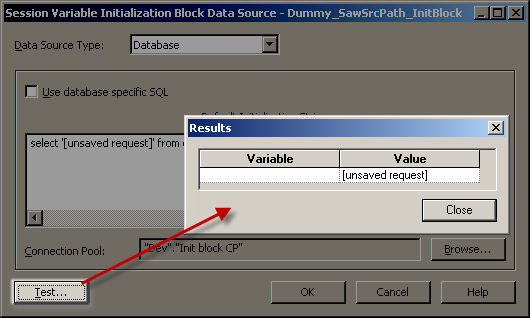

Set the Data Source Type to Database, and the init string to

select '[unsaved request]' from dual

Click on Browse to set the Connection Pool used. The connection pool should be one exclusively for init blocks (not the same you use for queries). If you try to use the same connection pool as for queries, you’ll most likely get an error when you logon.

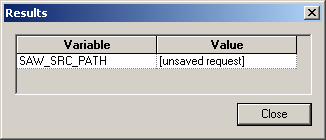

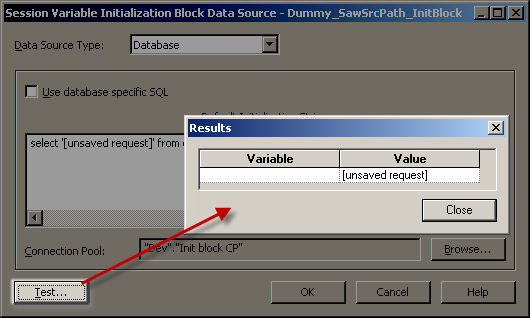

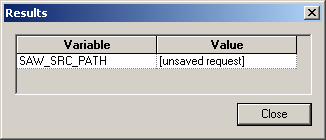

Once you’ve set the connection pool, click on Test – you should get a result as shown:

If the Test doesn’t succeed then you need to fix the problem before you continue.

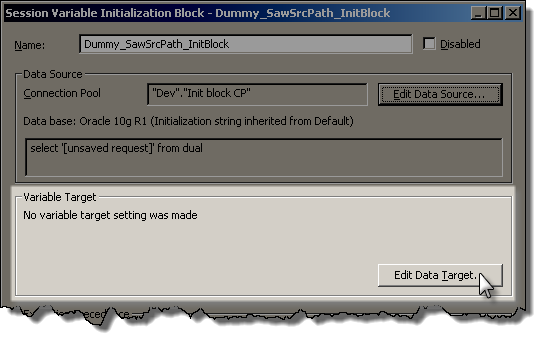

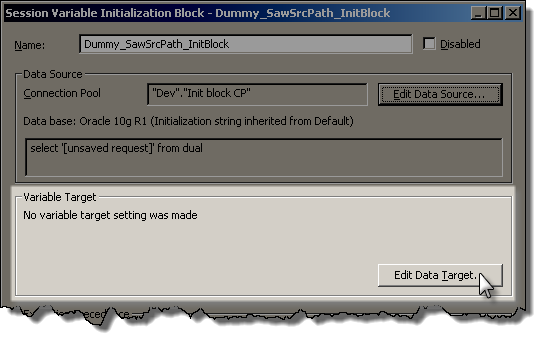

Assuming it’s worked, click OK to return to the Init Block creation window. We now want to define the dummy variable, so to do so click on “Edit Data Target”:

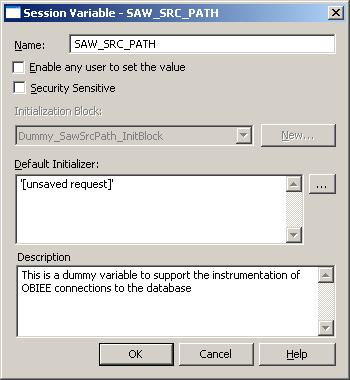

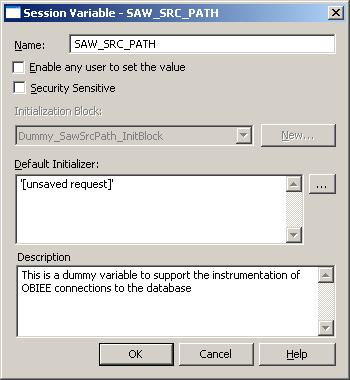

Click on New to create a new variable, and give it the name SAW_SRC_PATH. Make sure you get the name exactly correct, no typos.

Give it a default initializer, and then click OK.

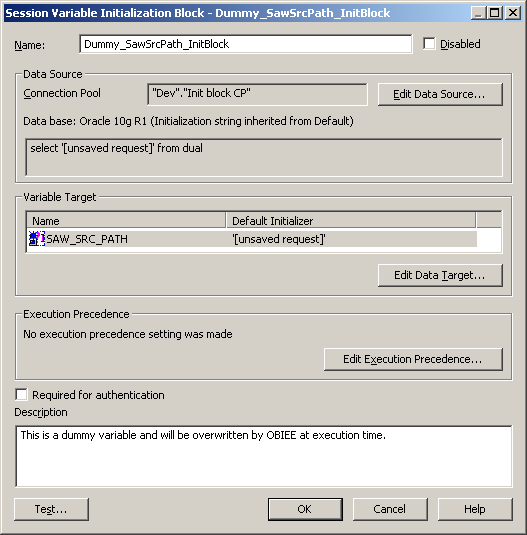

Make sure your init block setup now looks like this:

Click on Test, and expect to get this returned:

Assuming it works, then click OK to save the new Init Block and Variable.

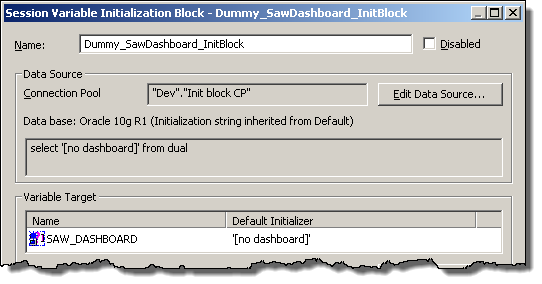

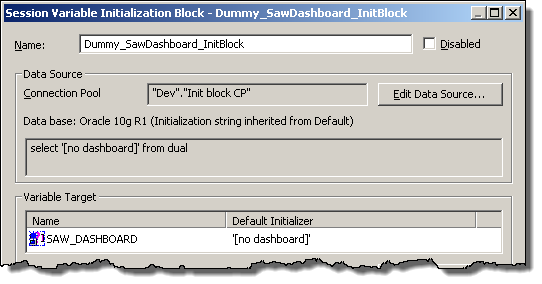

Repeat as above to create an init block/variable pair for SAW_DASHBOARD, looking like this:

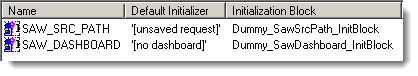

When you’ve finished, you should have two init block/variables pairs set up like this:

Session Variables

Initialisation Blocks

Implementing it – connection pool

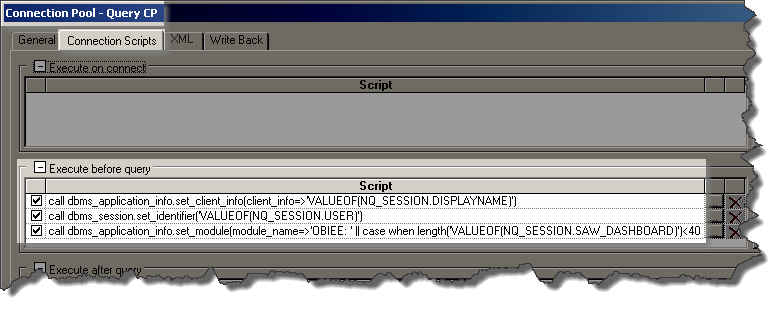

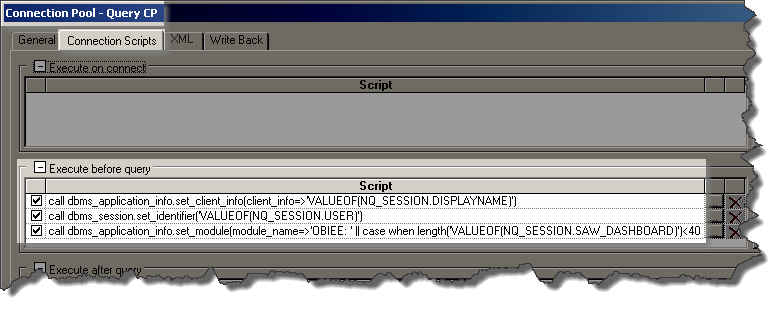

Add these three SQL statements to the “Execute before query” of “Connection Scripts” of each Connection Pool which is used for queries.

Do not add them to ones which are used for init blocks / authentication etc.

call dbms_application_info.set_client_info(client_info=>'VALUEOF(NQ_SESSION.DISPLAYNAME)')

call dbms_session.set_identifier('VALUEOF(NQ_SESSION.USER)')

call dbms_application_info.set_module(module_name=>'OBIEE: ' || case when length('VALUEOF(NQ_SESSION.SAW_DASHBOARD)')<40 then 'VALUEOF(NQ_SESSION.SAW_DASHBOARD)' else '...' || substr('VALUEOF(NQ_SESSION.SAW_DASHBOARD)',-37) end,action_name=>case when length('VALUEOF(NQ_SESSION.SAW_SRC_PATH)')<31 then 'VALUEOF(NQ_SESSION.SAW_SRC_PATH)' else '...' || substr('VALUEOF(NQ_SESSION.SAW_SRC_PATH)',-28) end);

This sets values as follows:

- Client Identifier is the OBIEE login user id

- Client Info is the user’s display name.

- Module and Action are populated with the dashboard name (prefixed by “OBIEE”) and report names respectively, truncated to the left if necessary to fit into the field size.

NB CLIENT_IDENTIFIER and CLIENT_INFO have a larger capacity so could be used if you want to view more of the report/dashboard detail:

V$SESSION column Max value length

MODULE 47

ACTION 31

CLIENT_INFO 63

CLIENT_IDENTIFIER 63

Reference:

DBMS_APPLICATION_INFO

DBMS_SESSION

Testing the changes

If you’re currently logged into Answers, logout and log back in. This is necessary for the dummy session variables to populate.

Run this sql*plus SQL script below to look at any existing OBIEE queries running on the database.

set linesize 170

col program for a30

col client_info for a20

col client_identifier for a18

col module for a47

col action for a31

SELECT SID,PROGRAM, CLIENT_IDENTIFIER, CLIENT_INFO, MODULE, ACTION FROM V$SESSION WHERE LOWER(PROGRAM) LIKE 'nqsserver%';

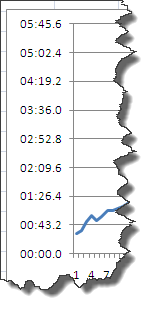

Now login to Answers, and run an existing report, or create a new one. When you re-run the SQL script you should see your session now listed:

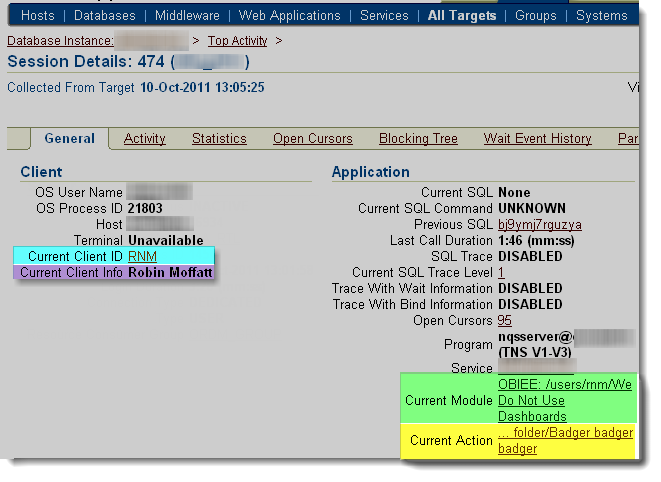

Not a fan of sql*plus?

If for some strange reason you don’t love sql*plus, you can obviously use the above SQL in any other SQL client. Or, you can fire up Enterprise Manager and see the same set of information:

(run at a different time from the SQL above, so different report and dashboard names)

Warning

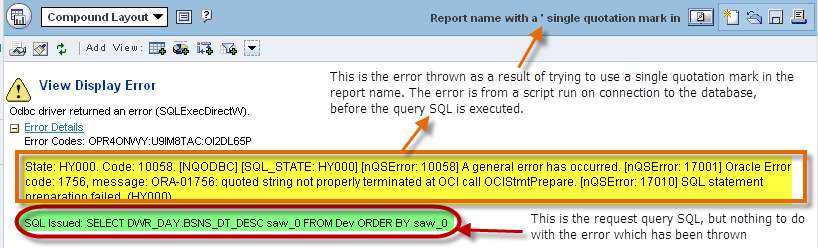

It’s occurred to me that by parsing in user-provided values to a string that’s executed on the database, there could be the potential for a breach through SQL Injection via a maliciously named report or dashboard.

I’ve not been able to find a report name which does cause trouble, but I have never tried exploiting SQL injection before.

It is another good reason to make sure that you’re using a DB account solely created for reporting queries from OBIEE, because then its privileges can be greatly restricted. This isn’t an excuse not to test for SQL Injection, but a reminder of why good practices such as granting of least privileges exist.

Troubleshooting